40 more ‘intelligence’ genes found

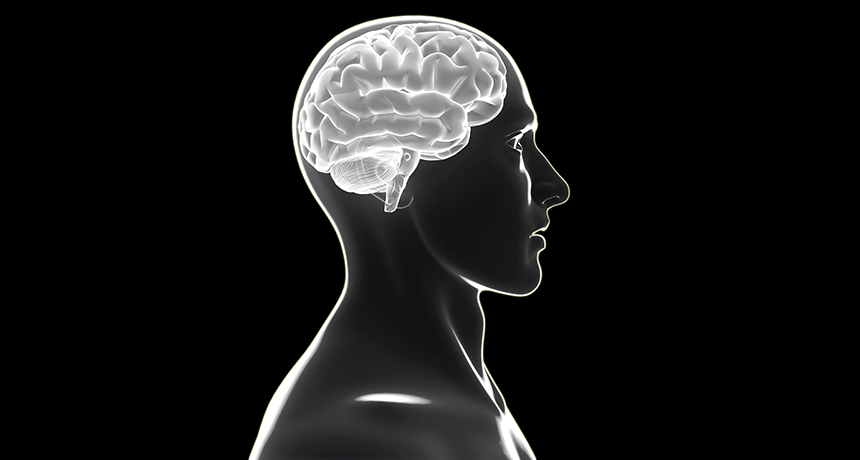

Smarty-pants have 40 new reasons to thank their parents for their powerful brains. By sifting through the genetics of nearly 80,000 people, researchers have uncovered 40 genes that may make certain people smarter. That brings the total number of suspected “intelligence genes” to 52.

Combined, these genetic attributes explain only a very small amount of overall smarts, or lack thereof, researchers write online May 22 in Nature Genetics. But studying these genes, many of which play roles in brain cell development, may ultimately help scientists understand how intelligence is built into brains.

Historically, intelligence research has been mired in controversy, says neuroscientist Richard Haier of the University of California, Irvine. Scientists disagreed on whether intelligence could actually be measured and, if so, whether genes had anything at all to do with the trait, as opposed to education and other life experiences. But now “we are so many light-years beyond that, as you can see from studies like this,” says Haier. “This is very exciting and very positive news.”

The results were possible only because of the gigantic number of people studied, says study coauthor Danielle Posthuma, a geneticist at VU University Amsterdam. She and colleagues combined data from 13 earlier studies on intelligence, some published and some unpublished. Posthuma and her team looked for links between intelligence scores, measured in different ways in the studies, and variations held in the genetic instruction books of 78,308 children and adults. Called a genome-wide association study, or GWAS, the method looks for signs that certain quirks in people’s genomes are related to a trait.
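For readers curious about what a GWAS does mechanically, here is a toy sketch in Python. It is not the study's actual pipeline, which the article does not describe. Everything in it is an assumption made for illustration: the simulated data, the function names, the effect size, the normal-approximation p-value and the Bonferroni cutoff. The idea is simply to test each genetic variant, one at a time, for a statistical link to a score, and to use a strict threshold because so many variants are tested.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0  # a variant (or score) with no variation carries no signal
    return sxy / math.sqrt(sxx * syy)


def association_p_value(allele_counts, scores):
    """Two-sided p-value for one variant, using a normal approximation
    to the t statistic (reasonable only for large samples)."""
    n = len(scores)
    r = pearson_r(allele_counts, scores)
    if abs(r) >= 1.0:
        return 0.0
    t = r * math.sqrt((n - 2) / (1 - r * r))
    return math.erfc(abs(t) / math.sqrt(2))


def simulate(n_people=2000, n_variants=50, causal=3, effect=0.5, seed=0):
    """Hypothetical data: each person carries 0, 1 or 2 copies of each variant;
    only the variant at index `causal` nudges the score."""
    rng = random.Random(seed)
    genotypes = [
        [rng.choice((0, 1)) + rng.choice((0, 1)) for _ in range(n_people)]
        for _ in range(n_variants)
    ]
    scores = [effect * genotypes[causal][i] + rng.gauss(0, 1)
              for i in range(n_people)]
    return genotypes, scores


def scan(genotypes, scores, alpha=0.05):
    """Test every variant; keep those passing a Bonferroni-corrected cutoff."""
    threshold = alpha / len(genotypes)
    return [idx for idx, counts in enumerate(genotypes)
            if association_p_value(counts, scores) < threshold]


genotypes, scores = simulate()
hits = scan(genotypes, scores)
print("variants flagged:", hits)
```

The simulated effect here is far larger than anything seen for a single variant in real intelligence data, which is one reason real studies need tens of thousands of participants to detect anything.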

This technique pointed out particular versions of 22 genes, half of which were not previously known to have a role in intellectual ability. A different technique identified 30 more intelligence genes, only one of which had been previously found. Many of the 40 genes newly linked to intelligence are thought to help with brain cell development. The SHANK3 gene, for instance, helps nerve cells connect to partners.
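The tallies above can be checked with a few lines of arithmetic. The variable names below are just illustrative labels, and the sketch assumes, as the wording “30 more” suggests, that the two techniques turned up non-overlapping sets of genes.

```python
# Genes flagged by the GWAS; half were not previously known.
gwas_genes = 22
gwas_new = gwas_genes // 2

# Genes flagged by the second technique; all but one were new.
other_genes = 30
other_new = other_genes - 1

newly_linked = gwas_new + other_new      # genes newly tied to intelligence
total_suspected = gwas_genes + other_genes  # all suspected "intelligence genes"

print(newly_linked, total_suspected)
```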

Together, the genetic variants identified in the GWAS account for only about 5 percent of individual differences in intelligence, the authors estimate. That means that the results, if confirmed, would explain only a very small part of why some people are more intelligent than others. The gene versions identified in the paper are “accounting for so little of the variance that they’re not telling us much of anything,” says differential developmental psychologist Wendy Johnson of the University of Edinburgh.
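To get a feel for how small 5 percent is, a rough conversion helps. The sketch below assumes a simple linear predictor, in which the share of variance explained is the square of the correlation between predicted and measured scores. That framing is an illustration, not something the study authors state in this article.

```python
import math

variance_explained = 0.05  # the authors' estimate for the GWAS variants

# Under a linear model, correlation is the square root of variance explained.
correlation = math.sqrt(variance_explained)

# Share of individual differences the variants leave unaccounted for.
unexplained = 1 - variance_explained

print(f"correlation ~ {correlation:.2f}, unexplained share = {unexplained:.0%}")
```

On that assumption, a score built from these variants would correlate only weakly with measured intelligence, and about 95 percent of the differences between people would remain unexplained.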

Still, knowing more about the genetics of intelligence might ultimately point out ways to enhance the trait, an upgrade that would help people at both the high and low ends of the curve, Haier says. “If we understand what goes wrong in the brain, we might be able to intervene,” he says. “Wouldn’t it be nice if we were all just a little bit smarter?”

Posthuma, however, sees many roadblocks. Beyond ethical and technical concerns, basic brain biology is incredibly intricate. Single genes have many jobs. So changing one gene might have many unanticipated effects. Before scientists could change genes to increase intelligence, they’d need to know everything about the entire process, Posthuma says. Tweaking genetics to boost intelligence “would be very tricky.”